When working with physical systems, your data acquisition has to adapt to the properties of what you are measuring. Some systems are inherently dynamic, which makes data acquisition more complicated. This article will lead you through some of the considerations for these dynamic measurements.

What Are Dynamic Measurements?

In general, dynamic measurements are high-speed measurements. However, that definition has a problem, as high-speed means different things to different people!

I would instead consider what features you are trying to extract. In dynamic measurements, our interest is in the shape of the signal over time or in its frequency content.

This is different from a more static measurement, such as in process control, where we are generally only interested in the current value.

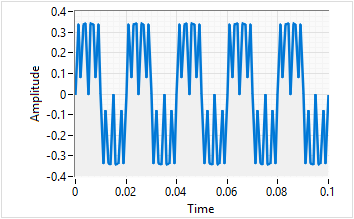

A dynamic signal and a static signal.

Some physical phenomena are usually dynamic:

- Pressure - In an engine or a compressor, pressure changes rapidly.

- Electrical - Voltage or current can change very fast in power systems or motor coils.

- Vibration - Seen either as acceleration or mechanical strain, we often look at the frequencies to identify causes.

- Sound - Similar to vibration, we are interested in the frequency components of noise to identify the source.

There are of course many others, but these are some of the most common we see in validation systems.

What Makes Them Special?

Dynamic means that these signals change quickly, which makes them more challenging to measure.

It begins with the acquisition rates, which tend to be >1 kHz. These higher rates require hardware with faster components, lower noise and higher bandwidth connections. With very high bandwidth signals, such as electrical signals, we might even need to acquire at >1 MHz rates.

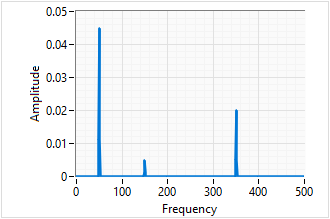

Then we have the analysis. Unlike static measurements, where we are usually feeding data to a user interface directly, dynamic systems often require more pre-processing, such as an FFT to get frequency information. Often we also need to extract the data of interest before showing it to the user, for example with a peak detection algorithm.

A repeating signal sampled at 1 kHz. Frequency analysis shows three different frequency components.
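To make the FFT and peak-picking steps concrete, here is a minimal sketch using NumPy. The three component frequencies and amplitudes are invented for illustration (they are not taken from the figure), and the "peak detection" is deliberately naive: it just picks the three largest spectrum bins.

```python
import numpy as np

fs = 1000.0            # sampling rate in Hz (the 1 kHz from the example)
n = 1000               # one second of data, so FFT bins are 1 Hz apart
t = np.arange(n) / fs

# Hypothetical components as (frequency in Hz, amplitude); assumed values
components = [(50.0, 1.0), (120.0, 0.5), (300.0, 0.25)]
signal = sum(a * np.sin(2 * np.pi * f * t) for f, a in components)

# Single-sided amplitude spectrum of the real-valued signal
spectrum = np.abs(np.fft.rfft(signal)) * 2 / n
freqs = np.fft.rfftfreq(n, d=1 / fs)

# Naive peak pick: the three largest bins, reported in ascending frequency
peak_idx = np.argsort(spectrum)[-3:]
peaks = sorted(float(freqs[i]) for i in peak_idx)
print(peaks)
```

Real signals rarely land exactly on an FFT bin, so a practical version would usually apply a window function and a more robust peak detector before reporting values to the user.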

Finally, we must think about storage. Do we need all of the raw data? Files will be much larger than those from static acquisition systems. Standard tools for visualising the data, such as Excel, will often break at these data volumes, so we need to consider more engineering-focused applications like DIAdem or custom interfaces to provide users with the right data at the right time.

A great example of this coming together is our work with Lontra to advance the design of their blade compressor®. We had to capture tightly synchronised data at 25 kHz so they could understand the real-world dynamics of their design. This included real-time processing of key performance values and a separate high-speed log to keep all of the raw data.

Key Requirements for Dynamic Data Acquisition

So, if it sounds like what you want to measure is a dynamic system, what must you consider when specifying a system?

1. Acquisition Bandwidth

The data acquisition board must provide a high enough sampling rate and correct filtering schemes.

Nyquist's theorem says the sampling rate must be more than twice the highest frequency of interest. In practice, we tend to aim for at least 5-10 times the highest frequency of interest to get good shape and amplitude data. That is the easy part.

However, there is also the thorny issue of aliasing. Aliasing occurs when a component above half the acquisition rate appears at a different, lower frequency in the sampled data. For example, if there is a 50 kHz component and you use a 40 kS/s acquisition rate, it will appear that there is a 10 kHz signal that doesn't exist. Once the data is digitised, there is nothing you can do to correct it, so you must have analogue anti-aliasing filters if this is a concern. Most NI cards for dynamic signals have these built in.
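The frequency at which an aliased component shows up can be worked out by folding it back into the range from 0 to half the sampling rate. The helper below is a small illustrative sketch (the function name is my own) and assumes a pure tone with no anti-aliasing filter in front of the converter.

```python
def alias_frequency(f_signal, f_sample):
    """Apparent frequency of a tone at f_signal when sampled at f_sample.

    Folds the tone into the 0 .. f_sample/2 band. Assumes an ideal sampler
    with no analogue anti-aliasing filter.
    """
    folded = f_signal % f_sample
    return min(folded, f_sample - folded)


# A 50 kHz component sampled at 40 kS/s appears at 10 kHz
print(alias_frequency(50_000, 40_000))  # 10000

# A 15 kHz component is below half the sample rate, so it is unchanged
print(alias_frequency(15_000, 40_000))  # 15000
```

Note that the result can never exceed half the sampling rate, which is a quick sanity check for any aliasing calculation.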

2. System Bandwidth

Depending on the speed and the channel count, it isn't hard to overwhelm slower interfaces such as USB 2.0.

Thanks to consumer technology, these interfaces have come a long way, but for high-throughput systems you may need to consider PXIe cards (typically 1 GB/s) over CompactDAQ systems (around 12-60 MB/s).
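A quick way to check whether an interface will cope is to multiply channel count, sample rate and bytes per sample. The sketch below does this for a made-up system (32 channels at 500 kS/s with 4-byte samples are assumed values, not a real specification) and compares the result against the rough interface figures quoted above.

```python
def required_throughput_mb_s(channels, sample_rate_hz, bytes_per_sample):
    """Raw data rate in MB/s, ignoring protocol and driver overhead."""
    return channels * sample_rate_hz * bytes_per_sample / 1e6


# Hypothetical system: 32 channels, 500 kS/s per channel, 4 bytes per sample
need = required_throughput_mb_s(32, 500_000, 4)

# Approximate interface limits in MB/s, taken from the figures in this article
interfaces = {"CompactDAQ (upper figure)": 60, "PXIe": 1000}
suitable = [name for name, limit in interfaces.items() if need <= limit]

print(f"Need {need:.0f} MB/s; suitable: {suitable}")
```

In practice you would leave headroom rather than run an interface at its limit, since sustained rates are usually lower than headline figures.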

3. Synchronisation

If you are acquiring data from more than one module, then there can be time shifts between them. With static data, a 100 µs time shift may not matter, but with dynamic systems, it can be a significant fraction of a signal period.

Generally speaking, if you have multiple modules in a single chassis, this synchronisation is straightforward with NI's DAQmx driver. However, if you have devices in multiple chassis, then additional wiring is required to share synchronisation signals between them.
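To see why a small time shift matters, it helps to convert it into a phase error at the frequency of interest: phase error in degrees is 360 × frequency × time shift. The sketch below applies that to a 100 µs shift; the 50 Hz and 1 kHz test frequencies are just illustrative choices.

```python
def phase_error_deg(frequency_hz, skew_s):
    """Phase error in degrees caused by a time skew between two channels."""
    return 360.0 * frequency_hz * skew_s


skew = 100e-6  # 100 µs time shift between modules

for f in (50.0, 1000.0):  # illustrative signal frequencies in Hz
    print(f"{f:.0f} Hz: {phase_error_deg(f, skew):.1f} degrees")
```

At 50 Hz the shift is under 2 degrees, but at 1 kHz it is a tenth of a full cycle, which is enough to distort any comparison between channels.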

4. High-Performance Data Storage

I find most data formats are either easy to read or easy to write. When working with dynamic systems, you will probably have to lean towards easy to write to get the desired performance. While you can open CSV files in almost any data software, large files can cause performance issues, so a binary format is often preferred. I tend to use TDMS as a compromise: it stores the data in binary for speed but can still hold information about how the file is structured.
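The trade-off between text and binary is easy to demonstrate with the Python standard library alone. This sketch writes the same synthetic samples as raw 64-bit floats and as one-value-per-line text, then compares file sizes. It does not use TDMS; the file names, sample count and 50 Hz test tone are assumptions for illustration, and the 25 kHz rate is simply borrowed from the example earlier in the article.

```python
import array
import math
import os
import tempfile

fs = 25_000   # sample rate in Hz (borrowed from the earlier example)
n = 100_000   # four seconds of data on one channel

# Synthetic 50 Hz sine wave stored as 64-bit floats
samples = array.array(
    "d", (math.sin(2 * math.pi * 50 * i / fs) for i in range(n))
)

with tempfile.TemporaryDirectory() as tmp:
    bin_path = os.path.join(tmp, "raw.bin")
    csv_path = os.path.join(tmp, "raw.csv")

    # Binary: the in-memory bytes are written as-is
    with open(bin_path, "wb") as f:
        samples.tofile(f)

    # Text: every value is converted to a decimal string first
    with open(csv_path, "w") as f:
        f.writelines(f"{v!r}\n" for v in samples)

    bin_size = os.path.getsize(bin_path)
    csv_size = os.path.getsize(csv_path)

    # Reading the binary file back restores the samples exactly
    restored = array.array("d")
    with open(bin_path, "rb") as f:
        restored.fromfile(f, n)

print(f"binary: {bin_size} bytes, text: {csv_size} bytes")
```

The raw binary file has no self-description at all, which is the gap a format like TDMS fills by storing structure information alongside the binary data.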

Questions

I hope this gives you a good introduction to some of the challenges that could face you when working with dynamic data acquisition.

We have worked with these types of systems for many years now, so if you have questions or would like our help, then contact us.